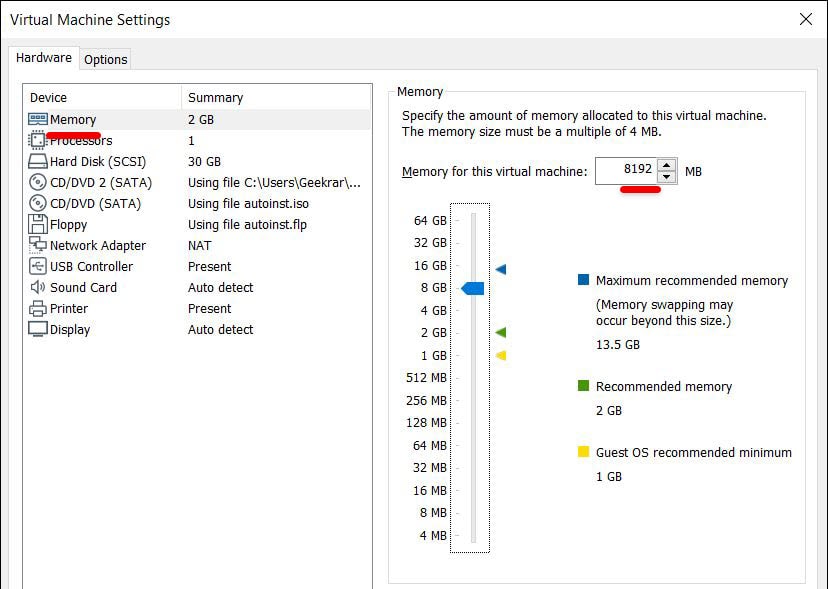

To specify a limit, you can add the option `perNodeAndCallInstanceLimit` to the UDF, as in the following example:

`CREATE OR REPLACE PYTHON3 SCALAR SCRIPT oom_udf (input_value INTEGER)`

To reproduce the problem, first confirm that the version is 7.1 and that the database has at least 3 GB of DB RAM. The test setup also defines a script named `nr_of_cores`:

`CREATE OR REPLACE PYTHON3 SCALAR SCRIPT nr_of_cores() RETURNS INTEGER AS`

Without a limit, the UDF works well for a low number of calls on a small data set of 100,000 rows:

`create or replace table oom_table as select 1 as c0 from values between 1 and 1e5`

`Select oom_udf( 1), oom_udf( 2), oom_udf( 3)`

The next query does 30 calls of the UDF. UDFs are treated as functions with side effects, so the individual UDF calls don't get optimized away. This query ends with an out-of-memory failure.

After re-connecting, you need to open the schema again. The UDF is then created again:

`CREATE OR REPLACE PYTHON3 SCALAR SCRIPT oom_udf(input_value INTEGER) RETURNS INTEGER AS`

This query works fine for the 30 UDF calls, which failed before.

To confirm that the out-of-memory issue doesn't happen with Lua, the UDF can also be created as a Lua script:

`CREATE OR REPLACE lua SCALAR SCRIPT oom_udf(input_value number)`

This query works fine for the 30 UDF calls, which failed before, and is much faster than the Python UDF for this simple task.

However, there are also cases where the instance limit can't help anymore:

A single instance of the UDF already consumes too much main memory.

The query contains too many UDF calls, so that the summed-up main memory consumption of a single instance per call would already break the memory limit. For example, we used 30 calls and that worked fine, but if we increase the number of UDF calls to 50 or higher, the query reaches the memory limit even with the instance limit.

On the client side, the memory usage of DataGrip is between 600 MB and 1 GB for me. I have also had it pop up and say that it has run out of memory when it hits 800+ MB.
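The interplay between the number of UDF calls and the instance limit can be illustrated with a rough back-of-the-envelope model. This is only a sketch under stated assumptions: it treats the memory needed by a query as UDF calls × instances per call × memory per instance, and every number in it (80 MB per instance, 4 instances per call when no limit is set, a 3 GB budget equal to the DB RAM) is hypothetical and chosen for illustration, not measured on a real database. The function names are likewise made up for this example.

```python
# Rough model of UDF memory consumption. All figures are illustrative
# assumptions, not measurements from an actual database.

def estimated_udf_memory_mb(udf_calls, instances_per_call, mb_per_instance):
    """Estimate total memory as calls x instances per call x memory per instance."""
    return udf_calls * instances_per_call * mb_per_instance


def fits_in_budget(udf_calls, instances_per_call, mb_per_instance, budget_mb):
    """Return True if the estimated consumption stays within the budget."""
    total = estimated_udf_memory_mb(udf_calls, instances_per_call, mb_per_instance)
    return total <= budget_mb


BUDGET_MB = 3 * 1024      # assumption: the whole 3 GB of DB RAM is available
MB_PER_INSTANCE = 80      # hypothetical footprint of one UDF instance
UNLIMITED_INSTANCES = 4   # hypothetical instance count per call without a limit
LIMITED_INSTANCES = 1     # instance limit set to 1 per node and call

if __name__ == "__main__":
    scenarios = [
        ("30 calls, no limit", 30, UNLIMITED_INSTANCES),
        ("30 calls, limit 1", 30, LIMITED_INSTANCES),
        ("50 calls, limit 1", 50, LIMITED_INSTANCES),
    ]
    for label, calls, instances in scenarios:
        total = estimated_udf_memory_mb(calls, instances, MB_PER_INSTANCE)
        verdict = "fits" if total <= BUDGET_MB else "out of memory"
        print(f"{label}: {total} MB of {BUDGET_MB} MB -> {verdict}")
```

Under these assumed numbers, the limit rescues the 30-call query, while the 50-call query exceeds the budget even with a single instance per call. That is the situation in which only fewer UDF calls or a lighter UDF can help.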
